About Me 🌲

Hi, I am a third-year Ph.D. student at the University of New South Wales (UNSW Sydney), supervised by Dr. Dong Gong, Dr. Alan Blair, and Prof. Lina Yao. Before that, I received my MPhil degree from the Australian Institute for Machine Learning (AIML), the University of Adelaide, supervised by A/Prof. Qi Wu and Dr. Yuankai Qi. I obtained my Bachelor’s degree from Beijing Jiaotong University, where I was advised by Prof. Runmin Cong.

My research interests lie in multimodal learning and continual learning, with a focus on continually improving LLMs/LVLMs to acquire new knowledge while mitigating forgetting. I am particularly interested in dynamic mixture of experts (Dynamic MoE) architectures and parameter-efficient fine-tuning (PEFT) for building scalable and adaptive LVLMs. My work also extends to vision-language pretraining, embodied AI, and multimodal reasoning.

Currently, I am exploring unified multimodal models (UMMs) that integrate visual understanding and generation.

News 🔥

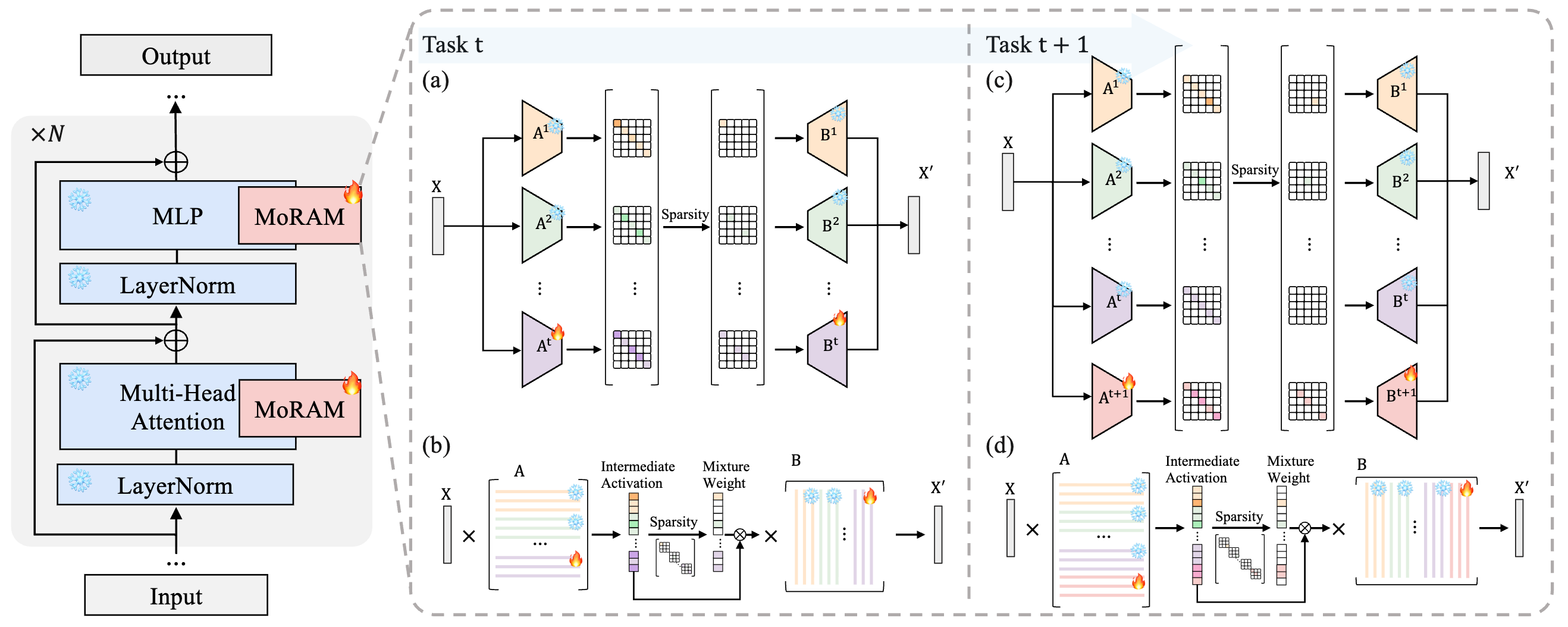

May 2026MoRAM is accepted to ICML 2026. Congratulations to Jeff! Thanks to all collaborators!

Continual learning via incremental sparse Mixture of Rank-1 Associative Memory experts.Feb 2026DyMoE is accepted to CVPR 2026. Thanks to all collaborators!

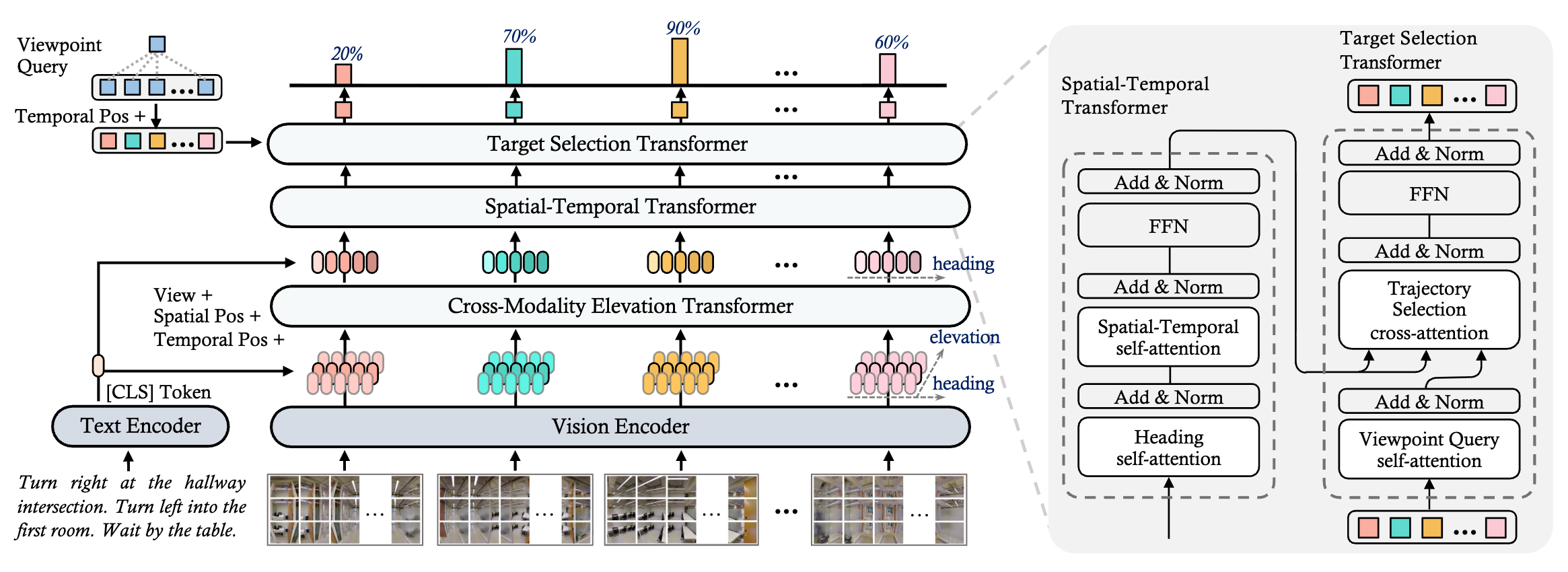

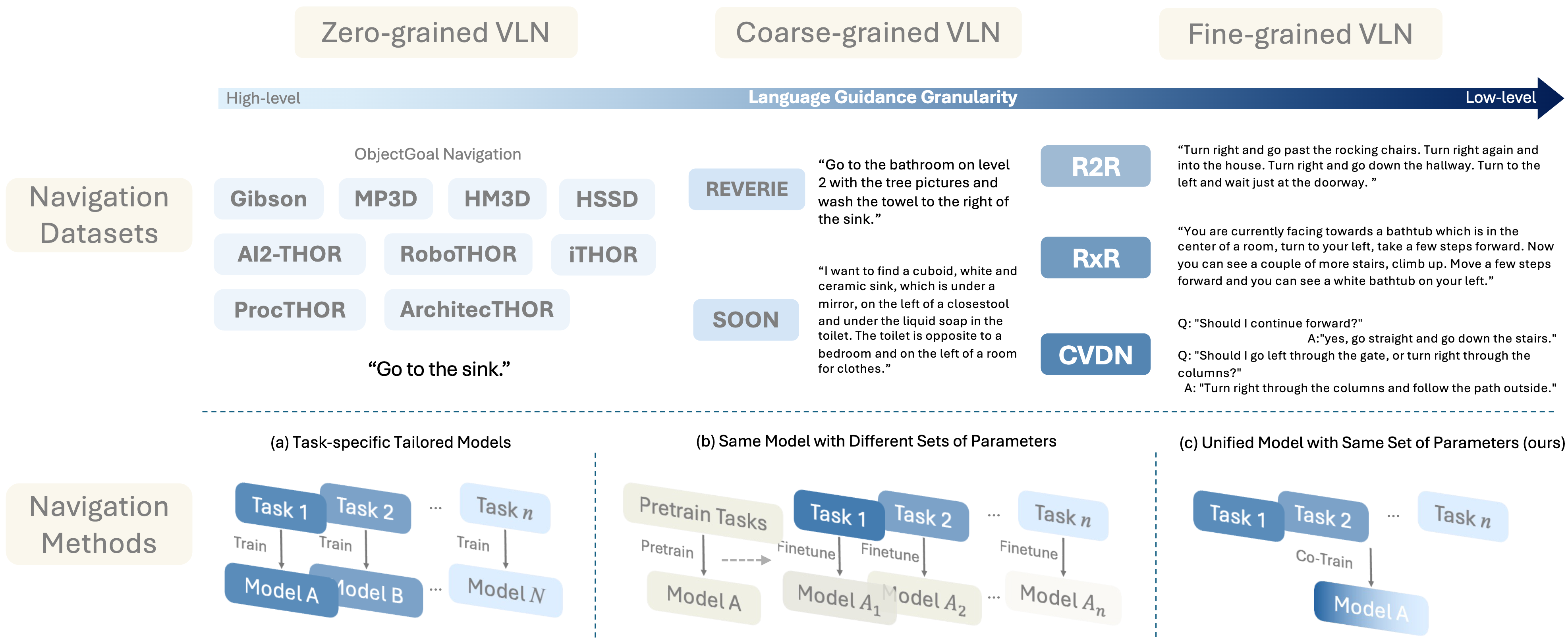

“On Token’s Dilemma” — Continual learning Dynamic MoE for LVLMs w/ token-level filtering.Jun 2025SAME is accepted to ICCV 2025. Congratulations to Gengze! Thanks to all collaborators!

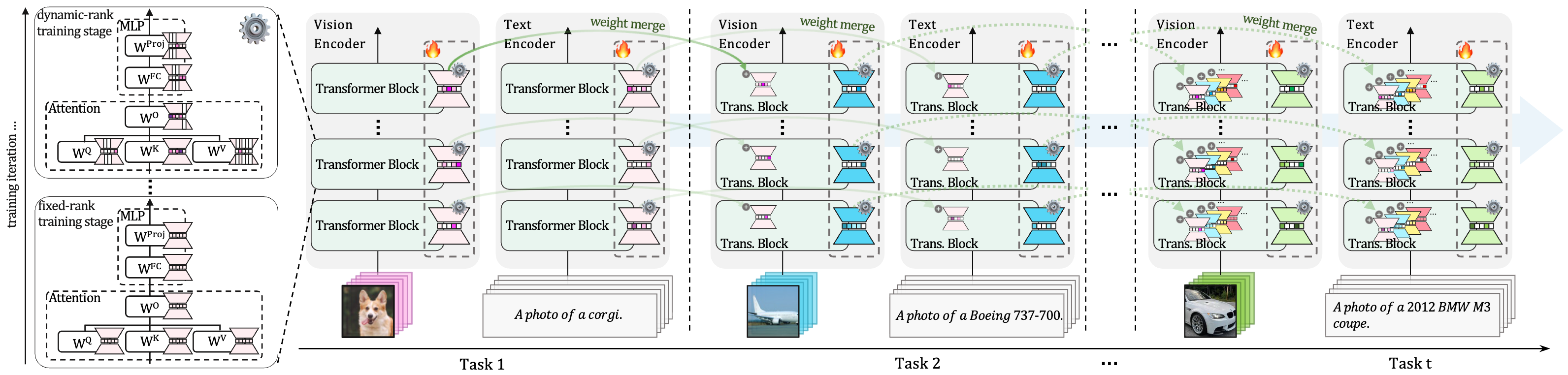

State-adaptive MoE for generic language-guided visual navigation.Dec 2024New preprint! Check CoDyRA on arXiv!

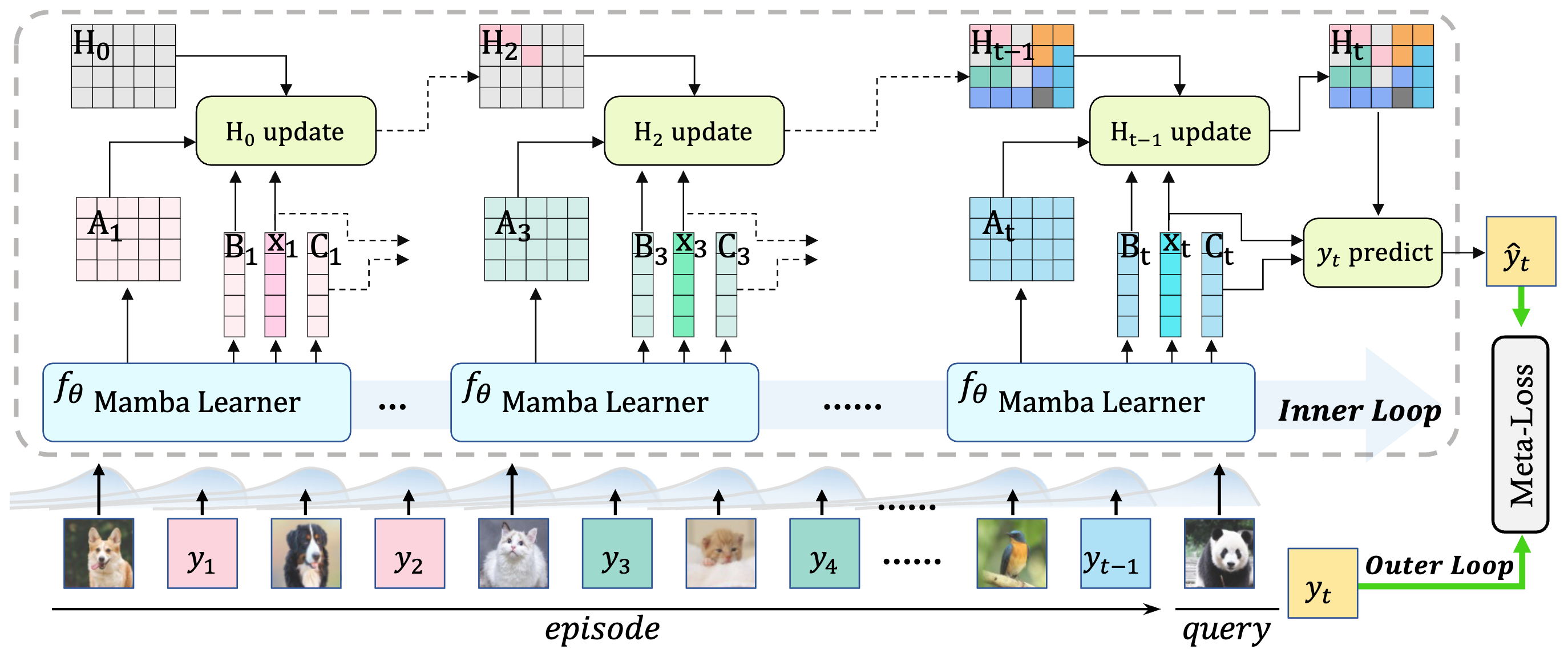

Dynamic rank-selective LoRA for knowledge retention in continual learning VLMs.Dec 2024New preprint! Check MambaCL on arXiv!

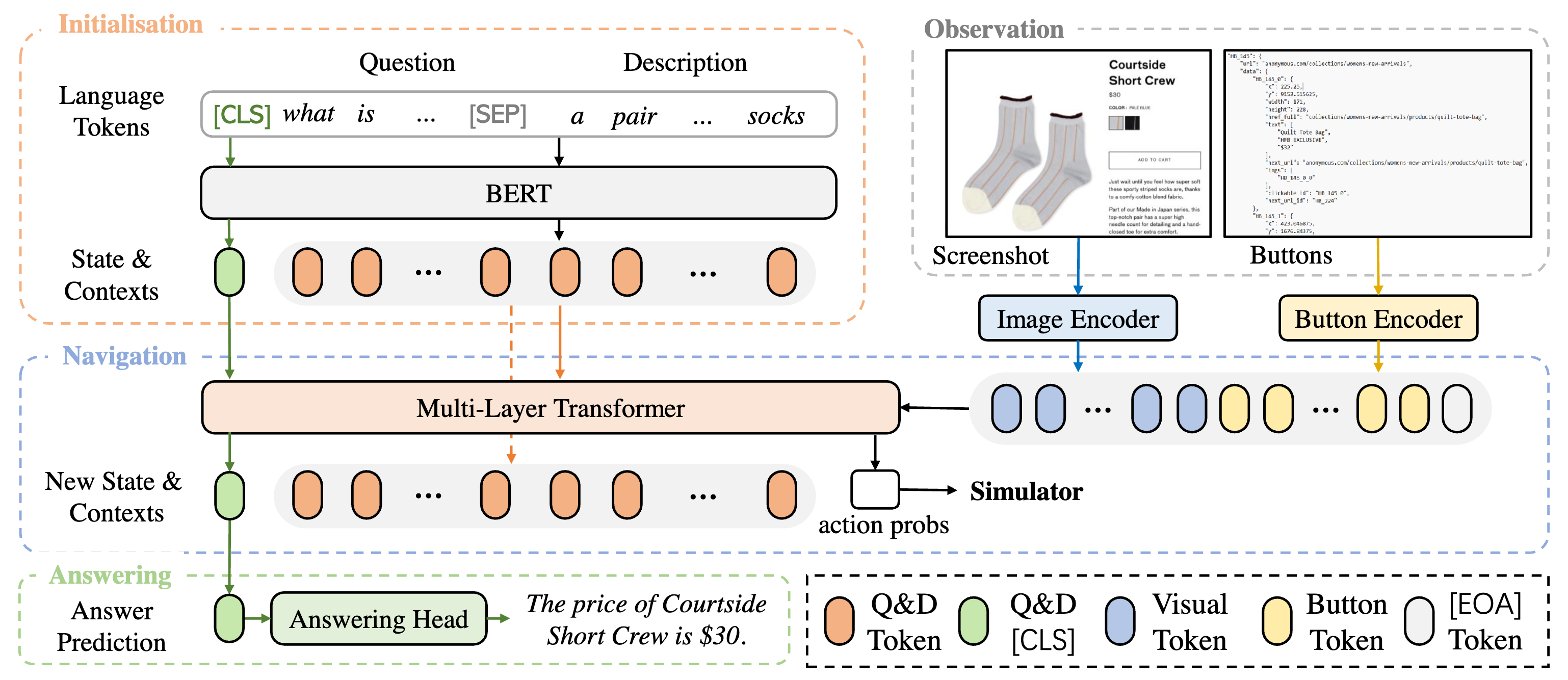

Meta-learning selective state space models (Mamba) for efficient continual learning.Dec 2023WebVLN is accepted to AAAI 2024. Thanks to all collaborators!

Vision-and-language navigation on shopping websites.Jul 2023MG-VLN is accepted to ACM MM 2023. Thanks to all collaborators!

Backtracking to passed correct locations via first-person video grounding for navigation.

Research 🌴

CVPR

ICML

Preprint

Preprint

ACM MM

AAAI

ICCV